Proprioceptive Sensors: The Robot's Inner Eye

How internal sensing transforms robots from machines into adaptive partners

Before a robot can understand the world around it, it must first understand itself. Proprioceptive sensors form the foundation of that self-knowledge: they measure the robot's own state in real time, from joint angles to torque, and so enable every movement and decision that follows.

In biology, proprioception is the sense that tells you where your arm is without looking at it. You can close your eyes and touch your nose; that is proprioception. In robotics, the equivalent capability is just as fundamental, and arguably even more critical: a robot that cannot sense its own position, force, and velocity is, at best, a static machine; at worst, a safety hazard. This is the first installment of DataRoot Labs' ongoing series on Sensors in Robotics.

In this article, we examine proprioceptive sensors: what they are, how they work, where they are deployed, and how machine learning is transforming their role across industrial automation, surgical robotics, humanoid systems, and beyond. As robotics becomes the backbone of manufacturing, healthcare, logistics, and exploration, understanding the sensing stack is no longer only for engineers. It is a strategic priority for any organization building, investing in, or deploying robotic systems.

What Are Proprioceptive Sensors?

Proprioceptive sensors measure the internal state of a robotic system: properties of the robot's own body and mechanics, rather than properties of the external environment. The term combines the Latin proprius (one's own) with reception, itself from the Latin capere (to take or grasp). In the robotics context, this translates into sensors that track joint positions, angular velocities, accelerations, torques, and the forces transmitted through a robot's structure.

CORE DISTINCTION

Proprioceptive sensors measure the robot itself. Exteroceptive sensors, such as cameras, LiDAR, and ultrasonic sensors, measure the external environment. Together they form the complete sensing stack, but proprioception is the necessary foundation: without knowing where its limbs are, a robot cannot meaningfully interpret what it sees.

Proprioceptive sensing is the prerequisite for any form of closed-loop control. It is what transforms a pre-programmed sequence of motor commands into a genuinely reactive, adaptive system. Every industrial arm, legged robot, surgical tool, and exoskeleton depends on it.

What Do Proprioceptive Sensors Measure?

Proprioceptive sensors collectively generate a continuous stream of data describing the robot's kinematic and dynamic state. The six primary signal categories are:

- Joint Position & Angle: Angular or linear displacement of each joint, typically in degrees or radians. The foundational signal for all kinematic computations.

- Velocity: Rate of change of joint position, expressed as angular velocity (rad/s) or linear velocity (m/s). Essential for damping control and dynamic motion planning.

- Acceleration: Rate of change of velocity; detected via accelerometers and IMUs in real time. Used for inertial compensation and fall detection in legged systems.

- Torque & Force: Rotational force at joints and linear forces along links, critical for compliance control, safe human-robot interaction, and grasping feedback.

- Orientation: Full 3D orientation expressed as roll, pitch, and yaw, essential for legged robots, aerial drones, and mobile manipulators operating in unstructured environments.

- Motor Current: Electrical current drawn by actuators, a low-cost proxy for torque widely used in compact systems where dedicated force-torque sensors are not viable.

Together, these signals form what engineers call the robot's state vector, a mathematical snapshot of the system at any given moment. Control algorithms read this vector continuously, often at hundreds or thousands of hertz, to regulate motion with precision.
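
To make the idea of a state vector concrete, here is a minimal Python sketch. The class and function names (`JointState`, `flatten_state`, `torque_from_current`) and all numeric values are illustrative assumptions, not taken from any particular robot. The torque estimate uses the common first-order relation torque = torque constant × current × gear ratio × efficiency, which ignores friction and other losses that a real drive would have to model.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class JointState:
    """One joint's slice of the state vector (field names are illustrative)."""
    position_rad: float     # joint angle, e.g. from an encoder
    velocity_rad_s: float   # joint velocity
    motor_current_a: float  # actuator current, used here as a torque proxy


def torque_from_current(current_a: float,
                        torque_constant_nm_per_a: float,
                        gear_ratio: float,
                        efficiency: float = 1.0) -> float:
    """First-order joint torque estimate: tau = Kt * I * N * eta."""
    return torque_constant_nm_per_a * current_a * gear_ratio * efficiency


def flatten_state(joints: List[JointState]) -> List[float]:
    """Stack all positions, then all velocities, into one flat vector."""
    return ([j.position_rad for j in joints] +
            [j.velocity_rad_s for j in joints])


# Hypothetical two-joint robot with made-up readings.
joints = [JointState(0.5, 0.0, 2.0), JointState(-1.0, 0.1, 0.5)]
state = flatten_state(joints)
tau = torque_from_current(joints[0].motor_current_a,
                          torque_constant_nm_per_a=0.1,
                          gear_ratio=100.0,
                          efficiency=0.8)
```

A controller would rebuild a vector like `state` on every cycle; the current-based torque is only as good as the assumed torque constant and efficiency.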

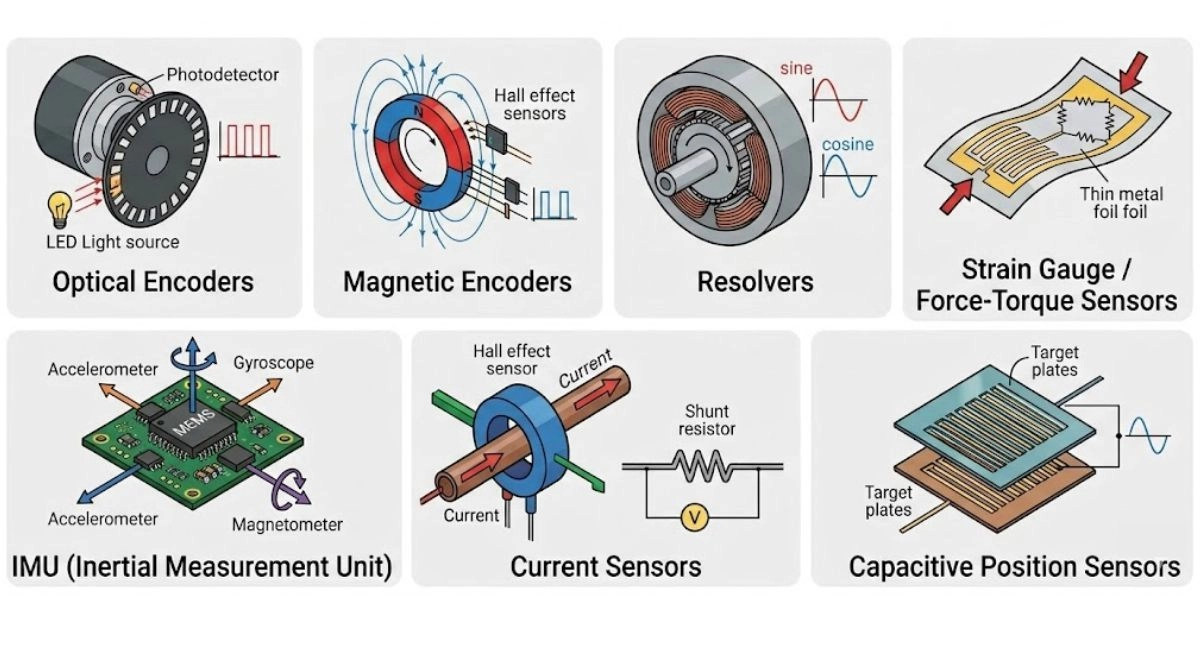

| Technology | What it measures | Typical use |
| --- | --- | --- |
| Optical encoders | Joint angle and position via light interruption or reflection | Servo motors in industrial arms; highest accuracy class |
| Magnetic encoders | Angle via Hall-effect sensing of rotating magnets | Compact joints, collaborative robots, and harsh environments |
| Resolvers | Absolute angle without battery backup | Aerospace, defense, and heavy industrial systems that demand extreme reliability |
| Strain gauge / force-torque sensors | Force and torque at joints or end-effectors | Cobots, surgical robotics, assembly force control |
| IMU (inertial measurement unit) | Linear acceleration and angular velocity, typically across 6 axes | Legged robots, drones, mobile bases, humanoids |
| Current sensors | Motor current as a torque proxy | Low-cost actuators, soft robots, wearables |
| Capacitive position sensors | Linear displacement with high resolution | Precision end-effectors, micro-robotics |
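
As a rough illustration of how an encoder reading becomes a joint angle, the sketch below converts raw counts to radians and computes the angular step of an absolute encoder from its bit depth. The function names and the 4096-count and 17-bit figures are assumptions chosen for the example, and the sketch assumes the encoder sits on the joint side, so no gear ratio is applied.

```python
import math


def counts_to_radians(counts: int, counts_per_rev: int) -> float:
    """Convert a raw encoder count into an angle in radians."""
    return 2.0 * math.pi * counts / counts_per_rev


def resolution_deg(bits: int) -> float:
    """Smallest angle step, in degrees, of an absolute encoder with `bits` bits."""
    return 360.0 / (2 ** bits)


# Hypothetical values: 1024 counts on a 4096-count encoder is a quarter turn.
angle = counts_to_radians(counts=1024, counts_per_rev=4096)
step_17_bit = resolution_deg(17)
```

For an encoder mounted on the motor side of a gearbox, the resulting angle would additionally be divided by the gear ratio.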

REDUNDANCY IS STANDARD PRACTICE

Industrial and safety-critical robots routinely fuse multiple sensor types for the same joint, for example, an encoder for position combined with a torque sensor and a current monitor, to detect failures and maintain safe operation under sensor degradation.
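
One simple way to use redundant sensors is a plausibility check: two independent estimates of the same quantity should agree within a tolerance. The sketch below is a hedged illustration of that idea only; the function names and the 2 Nm tolerance are hypothetical, and a real safety function would follow the applicable standard rather than a single threshold.

```python
def cross_check(primary: float, secondary: float, tolerance: float) -> bool:
    """Return True when two independent estimates of one quantity agree."""
    return abs(primary - secondary) <= tolerance


def joint_health(torque_sensor_nm: float,
                 torque_from_current_nm: float,
                 tolerance_nm: float = 2.0) -> str:
    """Classify a joint as 'ok' or 'fault' from redundant torque estimates."""
    if cross_check(torque_sensor_nm, torque_from_current_nm, tolerance_nm):
        return "ok"
    return "fault"
```

Calling `joint_health(10.0, 10.5)` returns `"ok"`, while `joint_health(10.0, 15.0)` returns `"fault"`; which sensor is wrong still has to be determined by other means.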

Typical sensor technologies

Where Are They Used?

Proprioceptive sensing is present in virtually every articulated robotic system. The following industries and players represent the commercial frontier:

Automotive Manufacturing

KUKA, Fanuc, and ABB dominate this segment with high-cycle welding and assembly arms that rely on sub-millimeter positional accuracy and torque feedback over thousands of cycles per day. Encoder and resolver-based joint sensing achieves positional repeatability below 0.02 mm in production-grade systems.

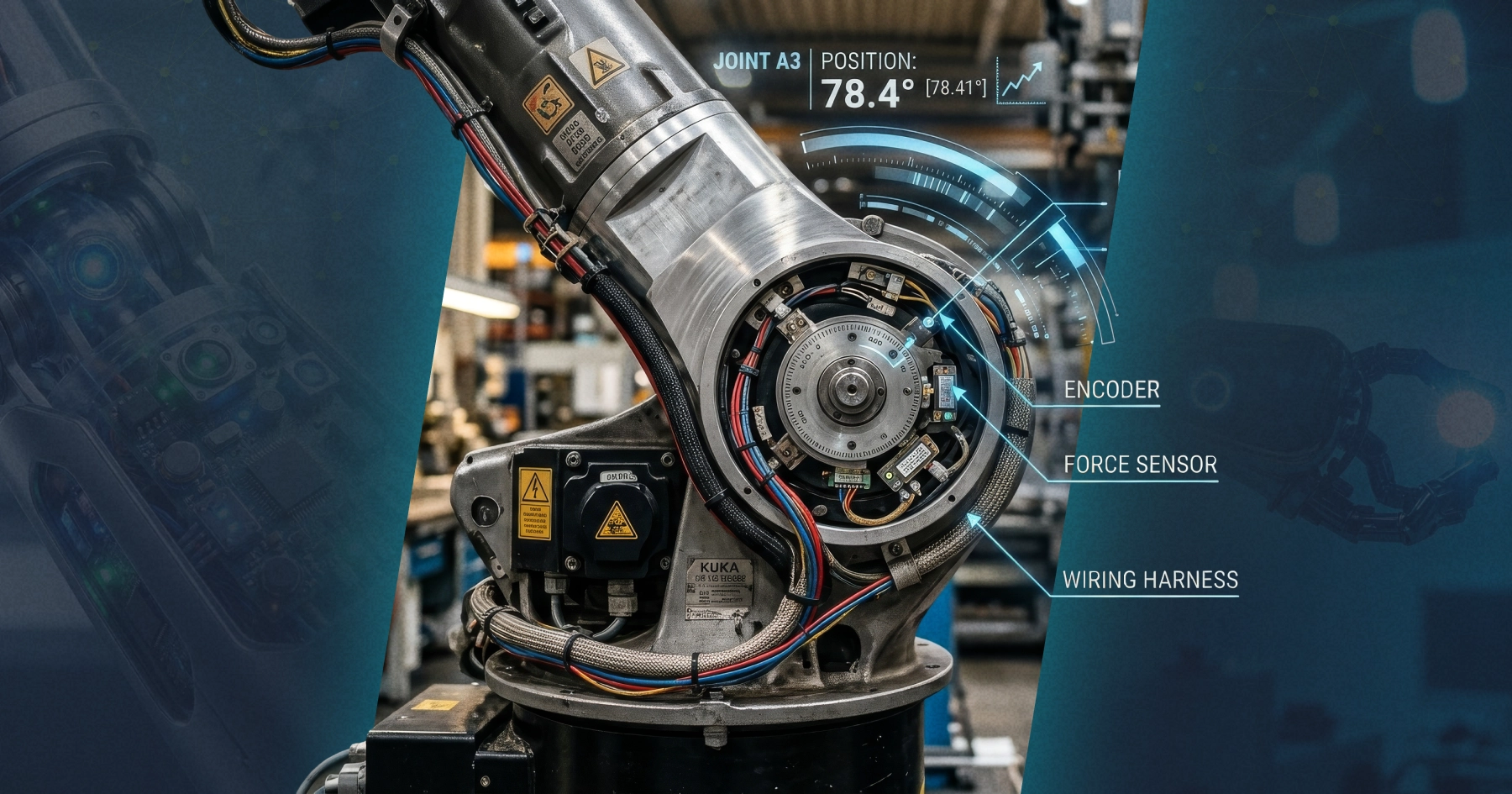

KUKA: German robotics manufacturer founded in 1898, KUKA is one of the world’s leading producers of industrial robots. Its six-axis arms are widely used in automotive body-in-white welding, assembly, and painting, and it counts BMW, Volkswagen, and Ford among its key customers.

KUKA systems engineering for the automotive industry

Fanuc: Japanese automation company and the global market leader in CNC systems and industrial robots, Fanuc is recognized for the reliability and precision of its yellow robot arms. Its robot lineup spans painting, welding, assembly, and palletizing across automotive and electronics manufacturing.

Fanuc robot arms

ABB: Swiss-Swedish multinational, ABB Robotics offers one of the broadest portfolios in industrial automation, covering welding, painting, material handling, and assembly. ABB is a major supplier to automotive OEMs and tier-one suppliers worldwide, and its YuMi cobot line extends its reach into collaborative applications.

ABB collaborative robot YuMi

Collaborative Robotics (Cobots)

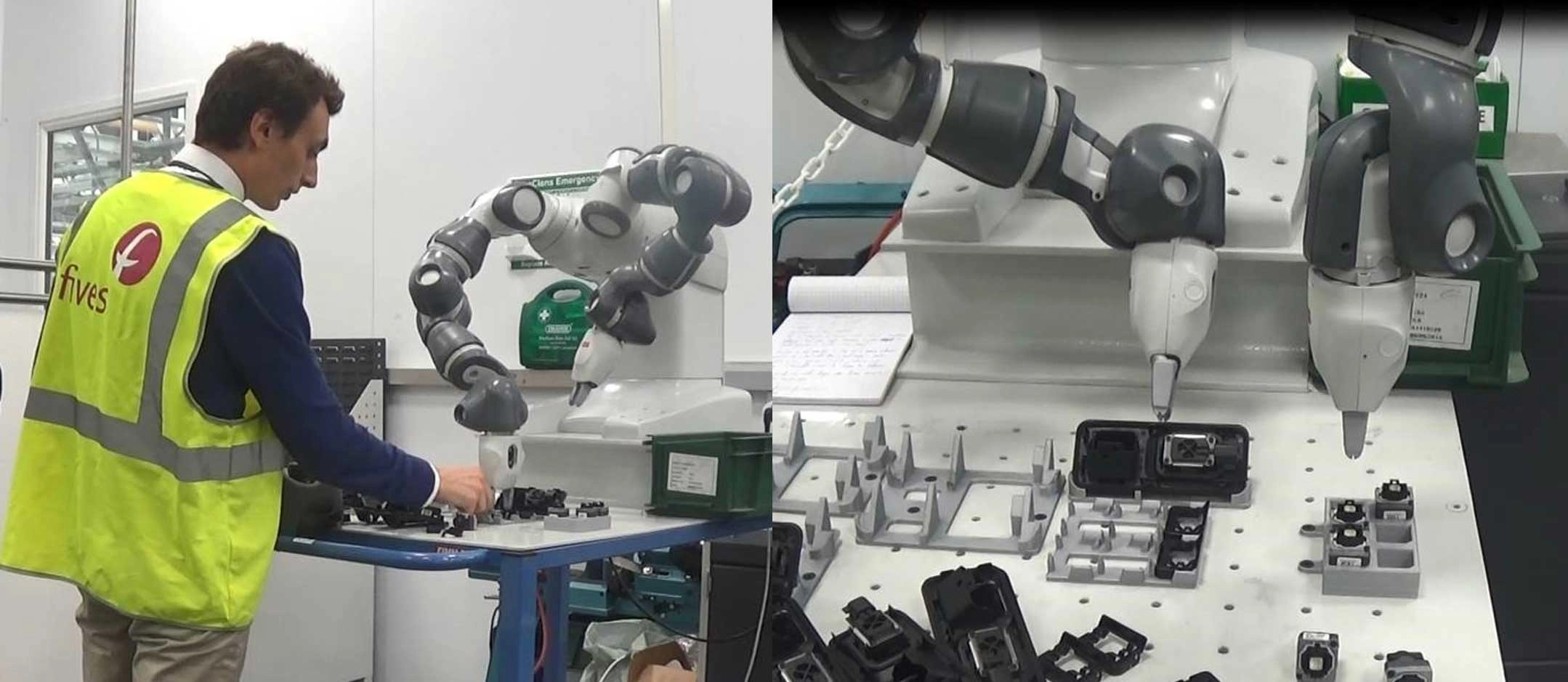

Universal Robots and Techman Robot have made joint torque sensing the defining feature of the cobot category. Real-time force limits halt motion on unexpected contact with humans, enabling ISO 10218 and ISO/TS 15066 compliance without safety cages. The cobot market is projected to exceed $12 billion by 2028.

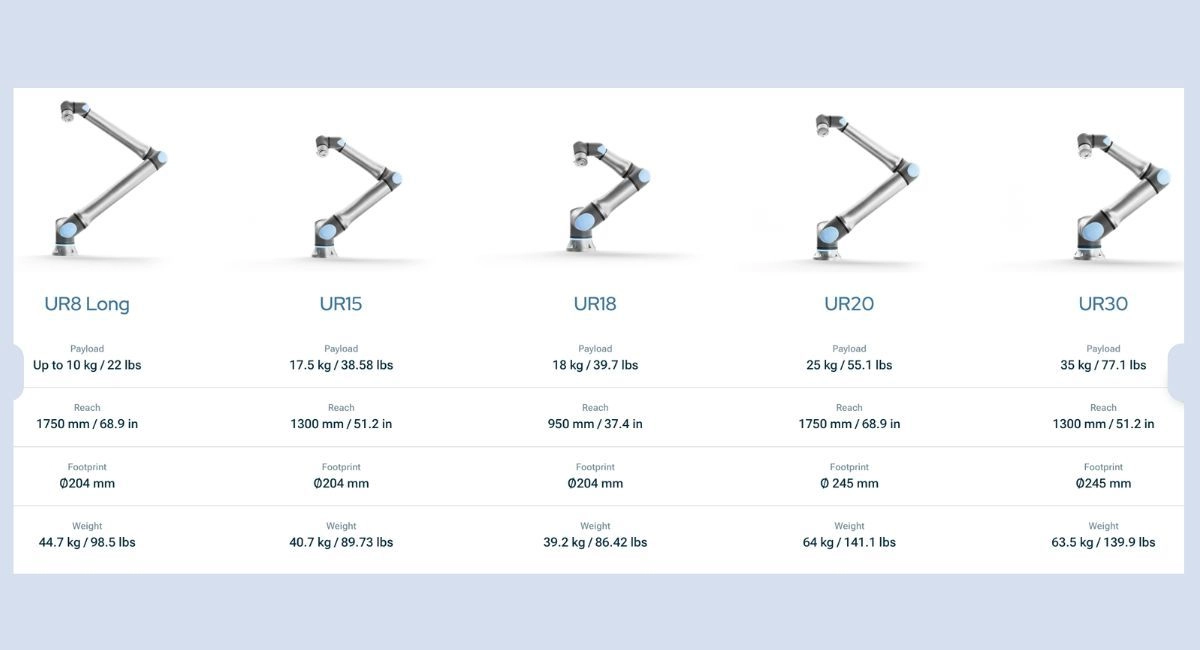

Universal Robots: Danish cobot pioneer founded in 2005, Universal Robots (UR) created the commercial collaborative robot category and remains its global market leader. Its UR-series arms are deployed across light assembly, electronics, food processing, and laboratory automation worldwide, with a focus on ease of programming and fast deployment.

Universal Robots UR-series arms

Techman Robot: Taiwanese cobot manufacturer Techman Robot differentiates its TM-series arms with built-in vision systems, integrating cameras and AI-based image recognition directly into the robot. This makes it well-suited for flexible inspection, pick-and-place, and assembly applications that require visual guidance without external camera setups.

Techman Robot AI Cobot Series

Surgical Robotics

Intuitive’s da Vinci system, Medtronic’s Hugo, and CMR Surgical’s Versius rely on sub-millimeter position sensing and fine force-torque feedback for tremor filtration and tissue-safe manipulation during minimally invasive procedures. Proprioceptive resolution in surgical robots now reaches micro-Newton sensitivity.

Intuitive: US-based Intuitive is the dominant force in robotic-assisted surgery, with its da Vinci platform used in millions of minimally invasive procedures worldwide across urology, gynecology, and general surgery. The company holds a commanding market share and continues to expand its installed base and consumables revenue globally.

Intuitive da Vinci 5 – robotic surgery system

Medtronic: One of the world’s largest medical device companies, Medtronic entered the surgical robotics market with its Hugo robotic-assisted surgery system, targeting laparoscopic procedures and aiming to expand access to robotic surgery in cost-sensitive and emerging markets.

Medtronic's Hugo – a robotic-assisted surgery system

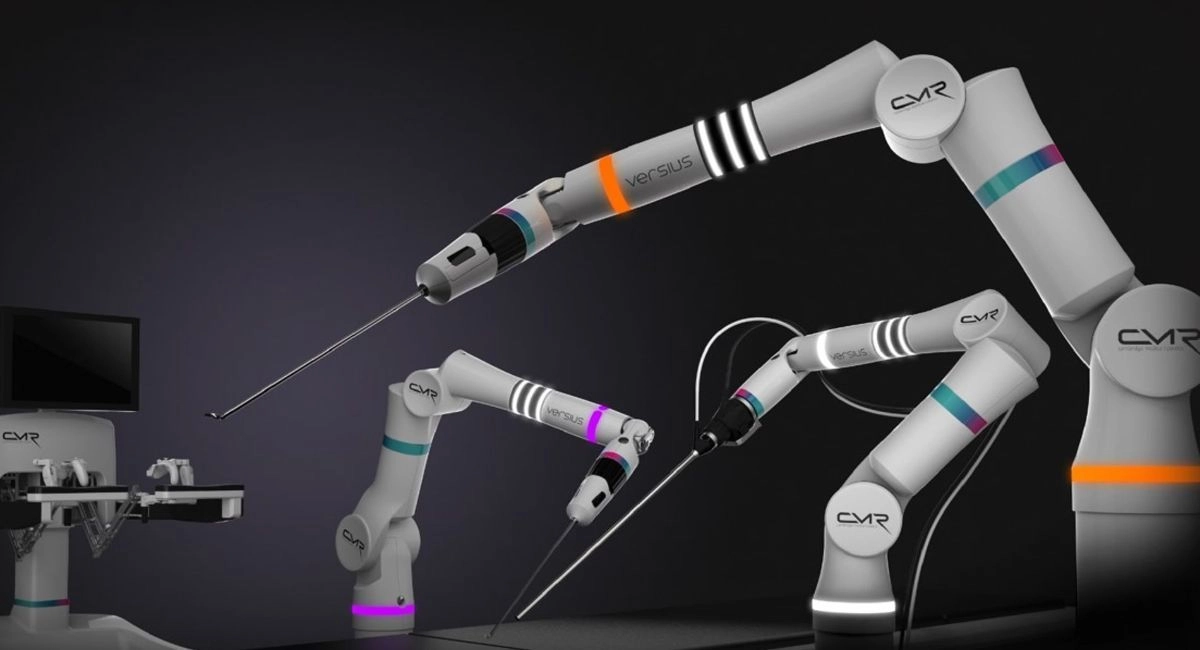

CMR Surgical: UK-based CMR Surgical developed the Versius modular surgical robotic system, designed for flexibility across a wide range of laparoscopic specialties. Its compact, portable form factor and subscription-based commercial model are positioned to make robotic surgery accessible to a broader range of hospitals.

CMR Surgical modular and portable surgical robot – Versius

Humanoid Robots

Boston Dynamics (Atlas), Agility Robotics (Digit), Figure, 1X Technologies, and Apptronik are deploying dense IMU and joint encoder arrays enabling dynamic balance and whole-body control across unstructured environments. The 2025–2026 period has seen the rapid commercialization of humanoid platforms for manufacturing and logistics.

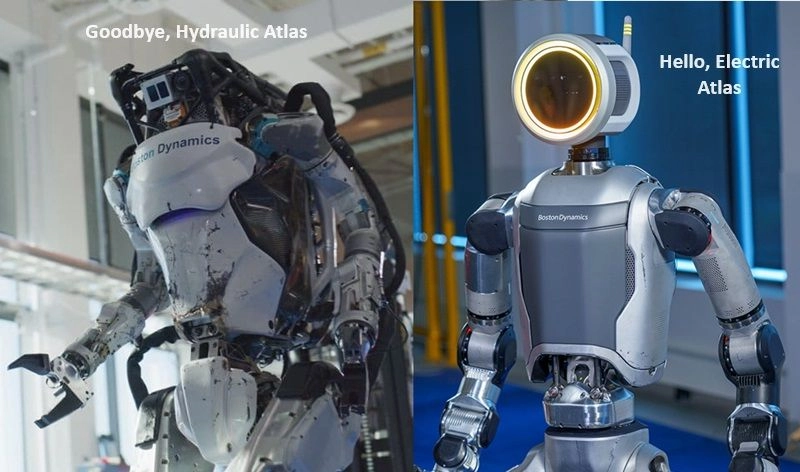

Boston Dynamics: US robotics company and pioneer of dynamic legged locomotion, Boston Dynamics is best known for its Atlas humanoid and Spot quadruped platforms. Acquired by Hyundai in 2021, the company is focused on commercializing its robots for industrial inspection, logistics, and construction applications.

Boston Dynamics humanoid robot and its enhanced version

Agility Robotics: US company behind Digit, a bipedal humanoid designed specifically for warehouse and logistics environments. Agility Robotics has partnered with Amazon to deploy Digit in fulfillment centers, targeting repetitive material-handling tasks in spaces designed for humans.

Digit, a smart little robot for deliveries by Agility Robotics

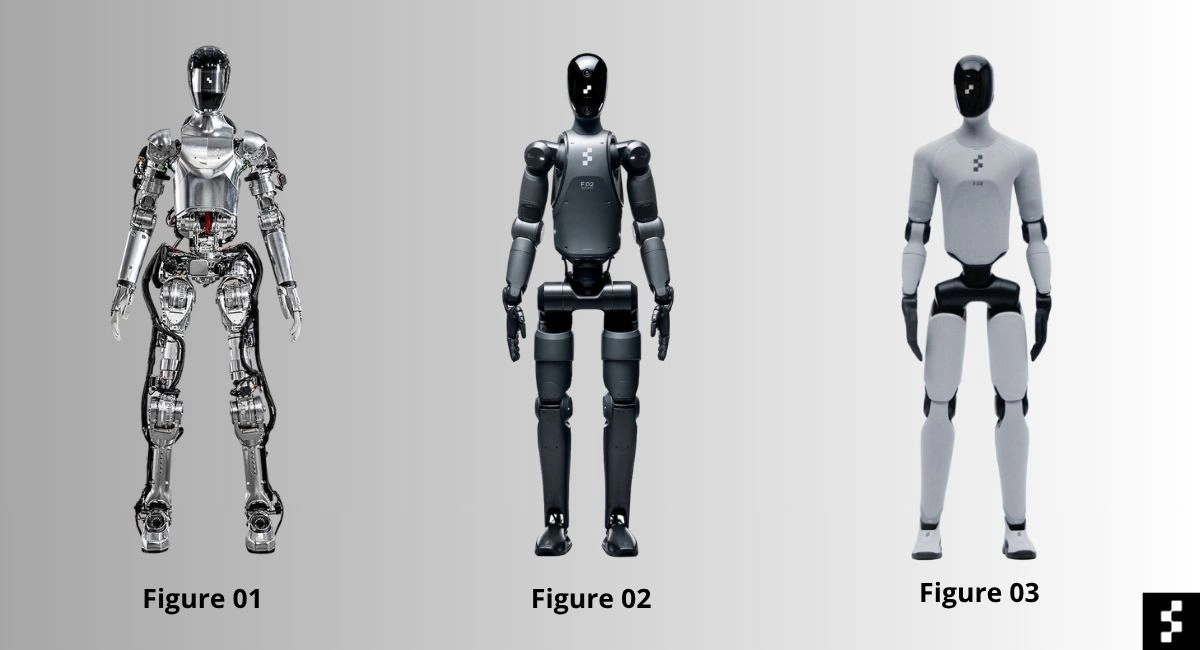

Figure: A well-funded US humanoid startup, Figure AI is developing a general-purpose humanoid robot targeting manufacturing and logistics. The company has attracted investment from major technology firms and automotive OEMs and is pursuing rapid commercialization of its Figure 01 and 02 platforms.

Figure AI Humanoids

1X Technologies: Norwegian-American robotics company developing androids for service and industrial roles. Its EVE and NEO platforms are designed for safe co-working with humans, and the company is focused on scaling humanoid robot production for real-world labor applications.

NEO, 1X Technologies’ humanoid robot

Apptronik: Austin-based robotics company producing the Apollo humanoid robot, Apptronik targets manufacturing and logistics use cases with a full-body proprioceptive sensing stack. The company has partnerships with NASA and automotive manufacturers, and positions Apollo as a practical platform for near-term commercial deployment.

Apollo by Apptronik

Logistics & Warehouse Automation

Amazon Robotics, Symbotic, and Berkshire Grey deploy mobile and articulated arms that use position and force feedback for reliable pick-and-place across variable payload conditions, operating continuously in 24/7 fulfillment center environments.

Amazon Robotics: The internal robotics division of Amazon, Amazon Robotics operates one of the world’s largest fleets of autonomous mobile robots and articulated picking arms across its global fulfillment network. It develops and deploys proprietary systems, including the Kiva drive units and robotic picking arms like Sparrow and Cardinal.

Amazon Robotics operates articulated picking arms across its global fulfillment network

Symbotic: US-based warehouse automation company, Symbotic builds AI-powered robotic systems for large-scale retail distribution centers. Its platform integrates high-speed autonomous mobile robots with end-to-end inventory software, and the company has major contracts with Walmart and other large retailers.

Symbotic autonomous robot

Berkshire Grey: US robotics company specializing in AI-enabled robotic picking and sortation systems for e-commerce fulfillment, retail, and parcel logistics. Berkshire Grey’s platforms use vision and force-sensing to handle highly variable product assortments, reducing reliance on manual picking in high-throughput environments.

Berkshire Grey’s AI-enabled robotic systems

Exoskeletons & Rehabilitation

Ekso Bionics and ReWalk use proprioceptive feedback to regulate assistive torque in real time, adapting to the user’s gait and muscle engagement during neurological rehabilitation and industrial augmentation.

Ekso Bionics: US-based Ekso Bionics produces wearable exoskeletons for medical rehabilitation and industrial worker support. Its EksoNR device is FDA-cleared for use in stroke and spinal cord injury rehabilitation programs, and its industrial line targets manufacturing workers performing overhead or physically demanding tasks.

Ekso Bionics unveils EVO, a lightweight exoskeleton for construction

ReWalk Robotics: Israeli-American company ReWalk develops FDA-cleared powered exoskeletons that enable people with lower-limb paralysis to stand and walk. Its systems are used in rehabilitation clinics and by individuals for personal mobility, making it one of the few exoskeleton companies with a cleared personal-use product.

ReWalk Robotics exoskeleton for people with lower-limb disabilities

BREAKTHROUGH COMPANIES TO WATCH

- Apptronik (USA): full-body proprioceptive sensing on the humanoid Apollo for manufacturing.

- Wandercraft (France): an exoskeleton startup with advanced gait proprioception for neurological rehab.

- Gecko Robotics (USA): inspection robots with high-sensitivity force sensing for confined industrial environments.

- FieldAI (USA): mobile robots fusing IMU and joint state for GPS-denied autonomous operation on construction sites.

Why Does It Matter?

Without proprioception, a robot is a ballistic projectile. With it, the robot becomes a partner.

Proprioceptive sensing is the part of robotics that organizations consistently underestimate until something goes wrong. The moment a robot stops knowing where it is, everything built on top of it collapses: the control loop, the safety guarantees, the ML layer. It is not a component; it is the substrate.

The strategic value of proprioceptive sensing operates on four dimensions:

- Control precision. Closed-loop feedback from encoders and torque sensors allows controllers to correct errors at hundreds of hertz. Without this, positional drift accumulates, and task quality degrades rapidly.

- Safety. Force-torque sensing is the primary mechanism by which collaborative robots detect unexpected contact with humans and halt within milliseconds; without it, the requirements of ISO 10218 and ISO/TS 15066 cannot be met.

- Adaptability. Proprioceptive signals allow robots to detect load changes, tool wear, surface compliance variations, and structural deformations, adapting in real time rather than failing on unexpected conditions.

- Predictive maintenance. Trends in motor current, vibration signatures, and joint friction are early indicators of mechanical wear. Predictive maintenance programs built on these signals reduce unplanned downtime significantly in high-cycle deployments.
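
The safety point above can be illustrated with a torque-residual check: compare the torque each joint actually measures with the torque a dynamic model expects, and treat a large difference as possible contact. This is a minimal sketch under stated assumptions. The function name and the 0.5 Nm threshold are hypothetical, the expected torques are assumed to come from some model not shown here, and real force limits come from the applicable safety standard and a risk assessment.

```python
from typing import Sequence


def contact_detected(measured_nm: Sequence[float],
                     expected_nm: Sequence[float],
                     threshold_nm: float) -> bool:
    """Flag contact when any joint's torque residual exceeds the threshold."""
    return any(abs(measured - expected) > threshold_nm
               for measured, expected in zip(measured_nm, expected_nm))


# Hypothetical two-joint readings: the second joint sees an unexpected load.
free_motion = contact_detected([1.0, 2.0], [1.1, 2.1], threshold_nm=0.5)
collision = contact_detected([1.0, 5.0], [1.1, 2.1], threshold_nm=0.5)
```

Here `free_motion` is `False` and `collision` is `True`; in a deployed cobot the second case would trigger a protective stop.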

How Is the Data Processed?

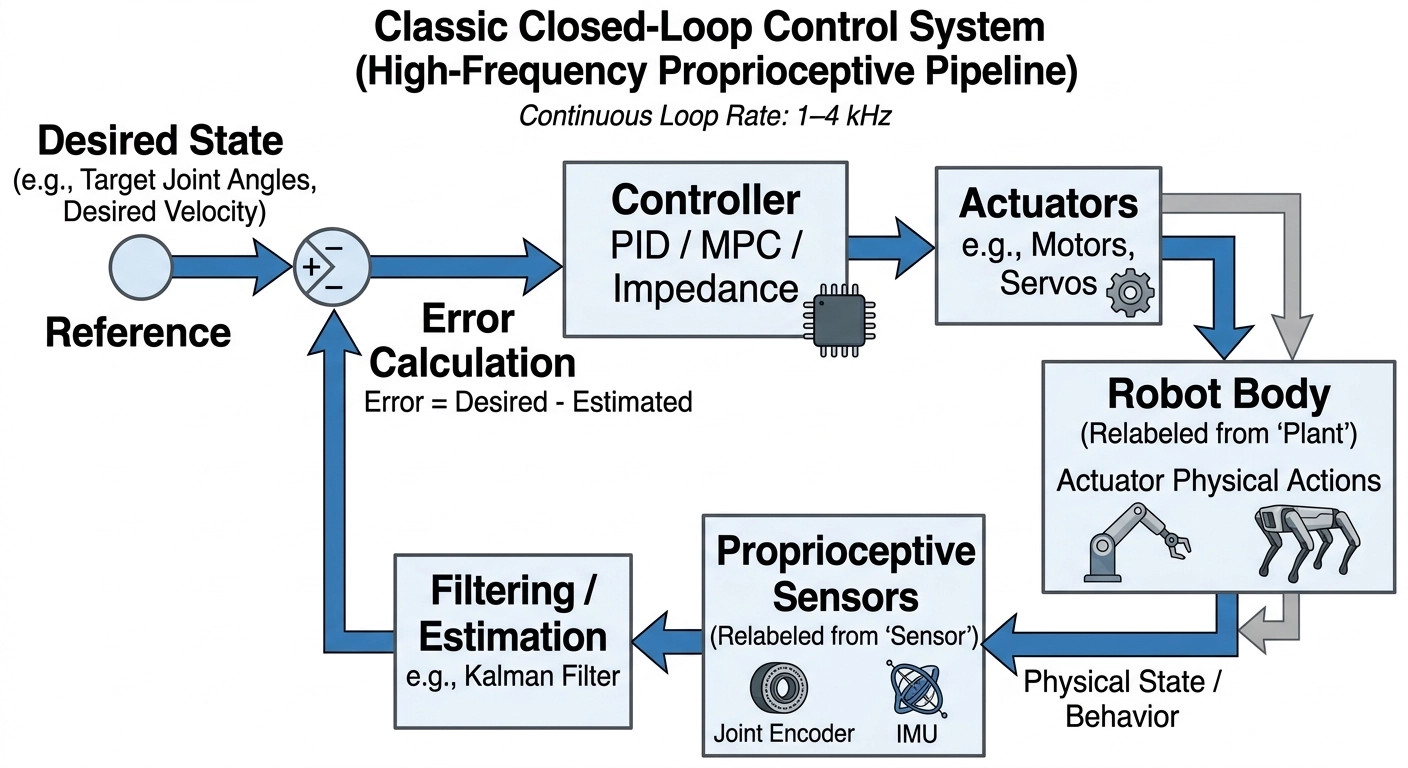

Proprioceptive data moves through a well-defined pipeline from raw sensor output to actionable control commands:

Step 1, Acquisition: Raw electrical signals (voltage, current, pulse trains) are captured by ADCs or digital interfaces (SPI, I²C, EtherCAT) at rates from 1 kHz to 20 kHz, depending on application demands.

Step 2, Filtering: Noise is a fundamental challenge. Extended Kalman Filters (EKF), complementary filters, and low-pass filters clean the signal, fuse redundant inputs (e.g., encoder + IMU), and reject mechanical vibration artifacts.

Step 3, State Estimation: Filtered signals are passed through forward kinematics or dynamic models to compute the full state: end-effector pose, contact forces, and link velocities. This is the live model of the robot's own body.

Step 4, Control: The estimated state feeds a controller (PID, Model Predictive Control, impedance control) that computes corrective commands to minimize error against the reference trajectory.

Step 5, Actuation & Feedback: Commands are sent to motor drivers; the resulting motion is immediately re-sensed, closing the loop. Modern robots run this cycle at 1–4 kHz continuously.

How proprioceptive sensors process data
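
The five steps above can be sketched as a single loop. The example below is a toy simulation, not a production controller: it assumes a single joint with unit inertia and no friction or noise, uses a first-order low-pass filter in place of a Kalman filter, and uses a PD-tuned PID in place of more advanced control laws. All class names, gains, and the 1 kHz rate are illustrative assumptions.

```python
class LowPass:
    """First-order low-pass filter: value += alpha * (sample - value)."""

    def __init__(self, alpha: float, initial: float = 0.0) -> None:
        self.alpha = alpha
        self.value = initial

    def update(self, sample: float) -> float:
        self.value += self.alpha * (sample - self.value)
        return self.value


class PID:
    """Textbook PID controller with a fixed time step."""

    def __init__(self, kp: float, ki: float, kd: float, dt: float) -> None:
        self.kp, self.ki, self.kd, self.dt = kp, ki, kd, dt
        self.integral = 0.0
        self.prev_error = None  # skip the derivative on the first sample

    def update(self, error: float) -> float:
        self.integral += error * self.dt
        if self.prev_error is None:
            derivative = 0.0
        else:
            derivative = (error - self.prev_error) / self.dt
        self.prev_error = error
        return (self.kp * error + self.ki * self.integral
                + self.kd * derivative)


def run_loop(target_rad: float, steps: int = 5000, dt: float = 0.001) -> float:
    """Drive a simulated unit-inertia joint toward target_rad at 1 kHz."""
    position, velocity = 0.0, 0.0
    lowpass = LowPass(alpha=0.5)
    pid = PID(kp=50.0, ki=0.0, kd=10.0, dt=dt)
    for _ in range(steps):
        raw = position                  # Step 1: acquisition (noise-free here)
        estimate = lowpass.update(raw)  # Step 2: filtering
        error = target_rad - estimate   # Step 3: state estimate vs. reference
        torque = pid.update(error)      # Step 4: control
        velocity += torque * dt         # Step 5: actuation (Euler integration)
        position += velocity * dt       # the next iteration re-senses this
    return position


final_position = run_loop(target_rad=1.0)
```

With these gains the simulated joint settles on the 1.0 rad target well within the five simulated seconds. A multi-joint robot would replace the single filtered angle with a full state estimate computed through forward kinematics or a dynamic model.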

LATENCY BUDGET

In high-bandwidth systems such as legged locomotion controllers, the total latency from sensor read to actuator command must remain below 1–2 milliseconds. This requires real-time operating systems (RTOS), dedicated FPGA co-processors, or on-chip sensor processing, not general-purpose compute.
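
A latency budget is ultimately arithmetic: the per-stage delays must sum to less than the allowed total. The sketch below shows that bookkeeping with entirely hypothetical stage names and microsecond figures; real numbers would have to be measured on the target hardware.

```python
from typing import Dict, Tuple


def within_budget(stage_latencies_us: Dict[str, int],
                  budget_us: int) -> Tuple[int, bool]:
    """Return the total latency and whether it fits inside the budget."""
    total = sum(stage_latencies_us.values())
    return total, total <= budget_us


# Hypothetical per-stage latencies in microseconds.
stages = {
    "sensor_read": 100,
    "bus_transfer": 150,
    "filter_and_estimate": 200,
    "control_law": 250,
    "command_out": 150,
}
total_us, fits = within_budget(stages, budget_us=1000)
```

In this made-up case the stages total 850 µs, which fits a 1 ms budget with 150 µs of headroom for jitter.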

Where Does Machine Learning Add Value?

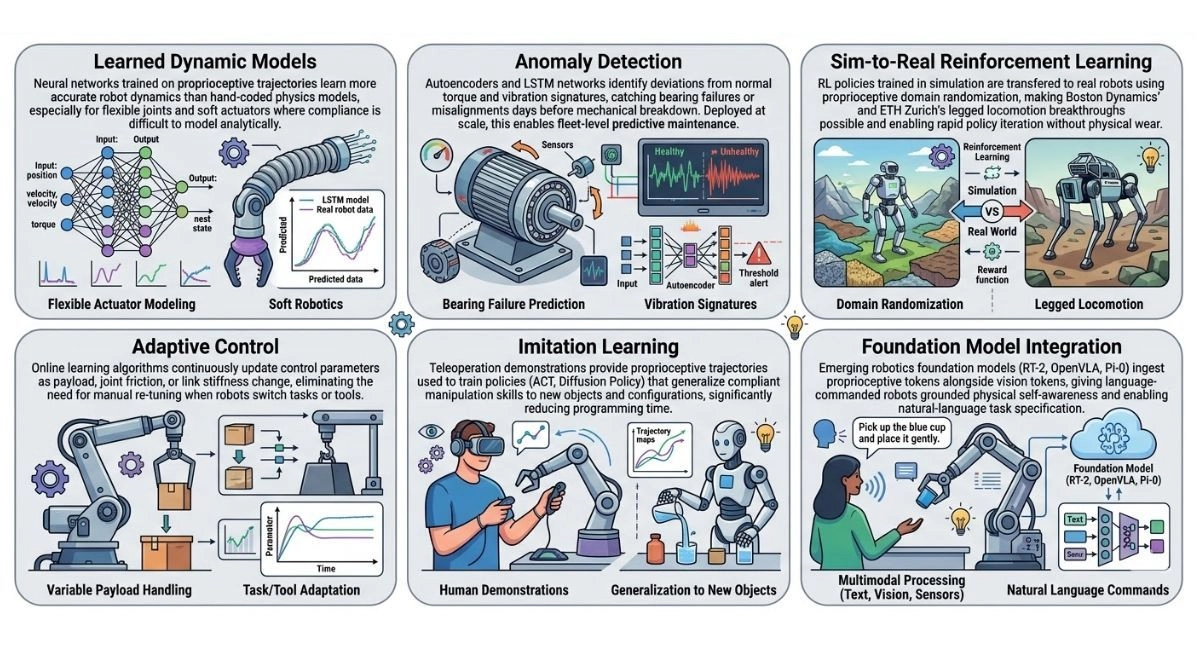

Classical control theory is powerful but rigid. ML is reshaping what proprioceptive data can do across six primary areas:

- Learned dynamic models. Neural networks trained on proprioceptive trajectories learn more accurate robot dynamics than hand-coded physics models, especially for flexible joints and soft actuators where compliance is difficult to model analytically.

- Anomaly detection. Autoencoders and LSTM networks identify deviations from normal torque and vibration signatures, catching bearing failures or misalignments days before mechanical breakdown. Deployed at scale, this enables fleet-level predictive maintenance.

- Sim-to-real reinforcement learning. RL policies trained in simulation are transferred to real robots using proprioceptive domain randomization, making Boston Dynamics' and ETH Zurich's legged locomotion breakthroughs possible and enabling rapid policy iteration without physical wear.

- Adaptive control. Online learning algorithms continuously update control parameters as payload, joint friction, or link stiffness change, eliminating the need for manual re-tuning when robots switch tasks or tools.

- Imitation learning. Teleoperation demonstrations provide proprioceptive trajectories for training policies (ACT, Diffusion Policy) that generalize compliant manipulation skills to new objects and configurations, significantly reducing programming time.

- Foundation model integration. Emerging robotics foundation models (RT-2, OpenVLA, Pi-0) ingest proprioceptive tokens alongside vision tokens, giving language-commanded robots grounded physical self-awareness and enabling natural-language task specification.

Areas of proprioceptive data where ML adds value
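
To show the shape of the anomaly-detection idea without a neural network, the sketch below uses a plain z-score against a healthy baseline. This is a deliberately simple statistical stand-in, not an autoencoder or LSTM; the function names, the sample torque values, and the threshold of 3 standard deviations are all assumptions for illustration.

```python
from statistics import mean, stdev
from typing import Sequence, Tuple


def fit_baseline(healthy_samples: Sequence[float]) -> Tuple[float, float]:
    """Summarize healthy operation as (mean, sample standard deviation)."""
    return mean(healthy_samples), stdev(healthy_samples)


def is_anomalous(value: float,
                 baseline: Tuple[float, float],
                 z_threshold: float = 3.0) -> bool:
    """Flag a reading that lies more than z_threshold deviations from the mean."""
    mu, sigma = baseline
    if sigma == 0.0:
        return value != mu
    return abs(value - mu) / sigma > z_threshold


# Hypothetical peak torques (Nm) recorded during healthy cycles.
healthy = [10.0, 10.2, 9.8, 10.1, 9.9, 10.0, 10.3, 9.7]
baseline = fit_baseline(healthy)
normal_cycle = is_anomalous(10.1, baseline)
suspect_cycle = is_anomalous(12.0, baseline)
```

A learned model earns its keep where this approach breaks down: when the signature depends on the shape of the whole torque curve rather than on one summary number.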

The convergence of dense proprioceptive data streams with transformer architectures is arguably the most active frontier in applied robotics AI as of 2026.

Real-World Constraints

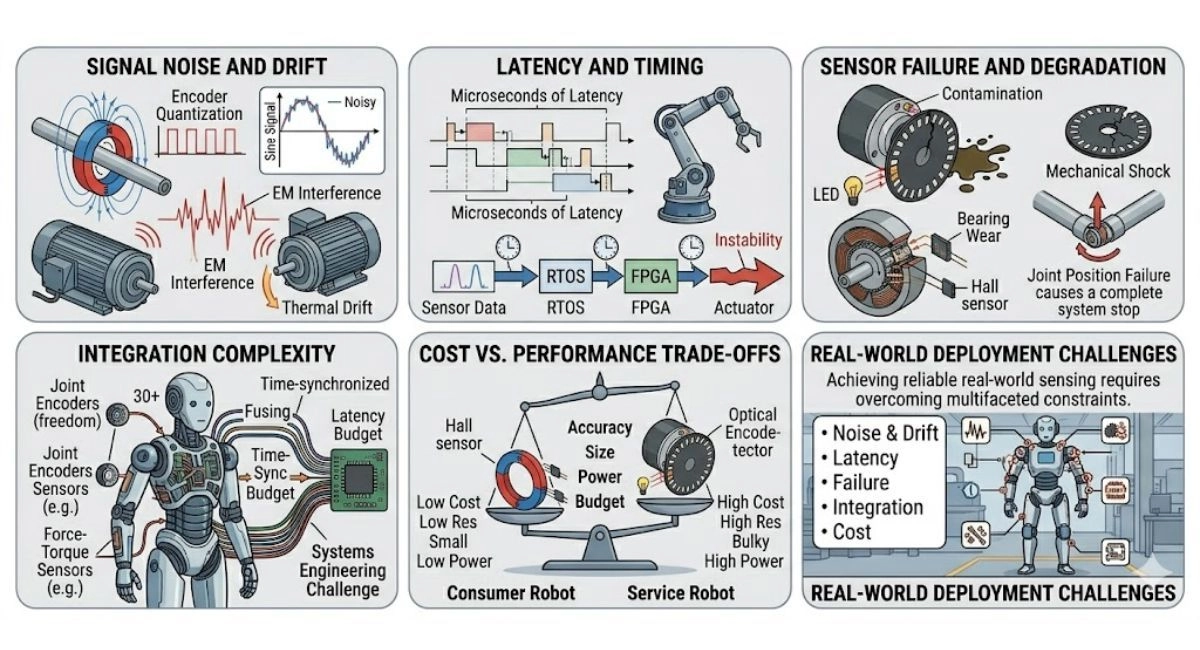

Despite decades of development, proprioceptive sensing in real deployments faces persistent practical obstacles:

- Signal noise and drift. Encoder quantization, electromagnetic interference from motors, and thermal drift in analog circuits all degrade signal quality, especially in high-speed, high-current industrial environments.

- Latency and timing. Even sub-millisecond delays or jitter in the sensing-to-control loop can cause instability in high-bandwidth systems. Real-time operating systems and dedicated FPGAs are often required.

- Sensor failure and degradation. Encoders fail due to contamination, mechanical shock, or bearing wear. A single point of failure in a joint position sensor can render an entire arm unsafe or non-functional.

- Integration complexity. Proprioceptive data from dozens of joints must be fused, time-synchronized, and interpreted under strict latency budgets, a significant systems engineering challenge in humanoid robots with 30+ degrees of freedom.

- Cost vs. performance trade-offs. High-resolution optical encoders and multi-axis force-torque sensors add significant cost and weight. Sensor selection is always a compromise among accuracy, size, power, and budget, and the trade-off is particularly acute in cost-sensitive consumer or service-robot segments.

Real-world constraints of proprioceptive data
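
Encoder quantization is easy to quantify. If velocity is estimated by differencing consecutive position samples, the smallest nonzero velocity the estimate can report is one encoder step divided by the sample period. The sketch below works through that arithmetic; the function name and the 14-bit and 1 kHz figures are assumptions for the example.

```python
import math


def velocity_quantum_rad_s(bits: int, sample_rate_hz: float) -> float:
    """Smallest nonzero velocity a finite-difference estimate can report."""
    angle_step_rad = 2.0 * math.pi / (2 ** bits)
    return angle_step_rad * sample_rate_hz


# Hypothetical 14-bit encoder sampled at 1 kHz and at 10 kHz.
quantum_1khz = velocity_quantum_rad_s(bits=14, sample_rate_hz=1000.0)
quantum_10khz = velocity_quantum_rad_s(bits=14, sample_rate_hz=10000.0)
```

A 14-bit encoder at 1 kHz gives a velocity step of roughly 0.38 rad/s, and sampling ten times faster makes that step ten times coarser, which is one reason raw differenced velocity is usually filtered before it reaches the controller.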

A Mini Scenario

Cobot Installs a Bolt with Force Feedback (Automotive Assembly, 2025)

SCENARIO

A Universal Robots UR10e cobot is deployed at a BMW assembly station in Leipzig to install torque-sensitive bolts on an engine block. The task requires precisely 22 Nm of torque: under-torquing causes safety failures, and over-torquing damages the threads.

- Proprioceptive stack at work: The robot's wrist-mounted 6-axis force-torque sensor streams real-time torque data at 2 kHz. An encoder on each of the 6 joints tracks position with 0.01° resolution. An IMU on the base detects vibrations from the moving assembly line.

- Control loop: As the bit engages the bolt, impedance control modulates arm stiffness, compliant enough to self-center on the bolt head, stiff enough to apply torque accurately. The torque sensor triggers a soft stop at exactly 22 Nm regardless of positional error.

- ML layer: An LSTM network monitors the torque signature across thousands of cycles. When it detects an anomalous torque ramp characteristic of a cross-threaded bolt, it flags the unit for human inspection before the next station, preventing a defect that classical threshold logic would have missed.

- Outcome: Defect rate from torque errors reduced by 73% vs. the previous pneumatic torque-wrench process. Zero missed cross-thread events in 6 months of deployment.

Universal Robots UR10e cobot
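
Two pieces of the scenario's control loop can be sketched in a few lines: the basic impedance law, in which commanded torque behaves like a spring and damper around the target, and the torque-triggered soft stop. This is a simplified illustration, not the UR10e's actual controller. The function names, gains, and sample readings are hypothetical; only the 22 Nm target comes from the scenario.

```python
from typing import Optional, Sequence


def impedance_torque(stiffness: float, damping: float,
                     position_error: float, velocity_error: float) -> float:
    """Spring-damper impedance law: tau = K * (x_d - x) + D * (v_d - v)."""
    return stiffness * position_error + damping * velocity_error


def soft_stop_index(torque_readings_nm: Sequence[float],
                    target_nm: float = 22.0) -> Optional[int]:
    """Index of the first reading at or above the target, or None if never hit."""
    for index, torque in enumerate(torque_readings_nm):
        if torque >= target_nm:
            return index
    return None


# Hypothetical gains and a made-up tightening ramp sampled by the F/T sensor.
command = impedance_torque(stiffness=200.0, damping=10.0,
                           position_error=0.02, velocity_error=0.1)
stop_at = soft_stop_index([5.0, 12.0, 18.0, 22.1, 25.0])
```

Lowering `stiffness` makes the arm more compliant while the bit seats on the bolt head; the soft stop depends only on measured torque, which is why it holds regardless of positional error.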

The One-Sentence Summary

Proprioceptive sensors are the irreducible foundation of robotic autonomy, the layer of self-knowledge upon which all control, safety, adaptation, and intelligent behavior must be built.

What's Coming Next?

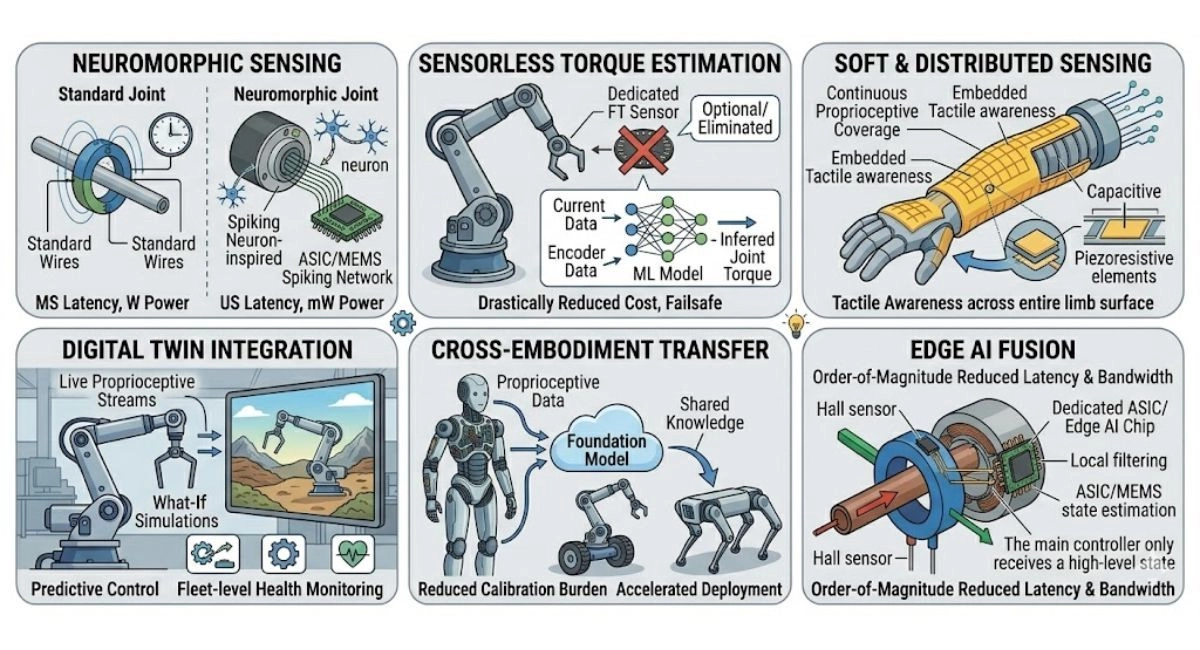

The proprioceptive sensing landscape is undergoing rapid transformation across hardware, software, and system architecture dimensions:

- Neuromorphic sensing. Event-driven sensor architectures inspired by biological neurons promise microsecond-latency proprioceptive feedback with orders-of-magnitude lower power consumption, making always-on joint monitoring viable in battery-powered systems.

- Sensorless torque estimation. ML models that infer joint torques purely from motor current and encoder data are making dedicated force-torque sensors optional in certain cobot applications, dramatically reducing hardware costs and eliminating a potential mechanical failure point.

- Soft & distributed sensing. Stretchable capacitive and piezoresistive sensor skins embedded in soft robot bodies replace discrete point sensors with continuous proprioceptive coverage, enabling compliant manipulation at scale and tactile awareness across entire limb surfaces.

- Digital twin integration. Live proprioceptive streams feed digital twin models that run in parallel with the physical robot, enabling predictive control, what-if simulations, and fleet-level health monitoring from a single sensor stream.

- Cross-embodiment transfer. Foundation models trained on proprioceptive data from one robot morphology are beginning to transfer useful priors to entirely different robot morphologies, significantly reducing the per-platform calibration burden and accelerating new robot deployment timelines.

- Edge AI fusion. Dedicated ASICs and edge AI chips co-located with sensors perform Kalman filtering and state estimation locally, reducing the latency and bandwidth burden on the central controller by an order of magnitude.

What’s coming next in proprioceptive sensing
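
Sensorless torque estimation can be illustrated with the simplest possible learned model: a straight line fitted by least squares from motor current to measured torque. This linear fit is a stand-in for the ML models mentioned above, which would also use encoder data and capture nonlinear effects such as friction. The function names and the calibration data are invented for the example.

```python
from typing import Sequence, Tuple


def fit_line(xs: Sequence[float], ys: Sequence[float]) -> Tuple[float, float]:
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def predict_torque(current_a: float, model: Tuple[float, float]) -> float:
    """Estimate torque from current using the fitted line."""
    slope, intercept = model
    return slope * current_a + intercept


# Hypothetical calibration run: currents (A) paired with sensor torques (Nm).
currents = [1.0, 2.0, 3.0, 4.0]
torques = [8.5, 16.5, 24.5, 32.5]
model = fit_line(currents, torques)
estimate = predict_torque(2.5, model)
```

Once such a model is calibrated against a reference force-torque sensor, the sensor can in principle be removed, at the cost of accuracy wherever the fitted relationship does not hold.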

Broader Industry Challenges

- Standardization gaps. There is no universal protocol for proprioceptive data. EtherCAT, CANopen, and proprietary bus architectures fragment the ecosystem, creating significant integration overhead when combining components from different vendors. Efforts by the Open Source Robotics Foundation and ROS 2 are progressing, but hardware-level standardization lags software.

- Sim-to-real gap in proprioception. Simulated proprioceptive signals do not perfectly replicate real sensor noise, quantization artifacts, and mechanical compliance. This gap remains a key bottleneck for deploying RL-trained policies without extensive real-world fine-tuning, though domain randomization techniques are narrowing it.

- Cybersecurity exposure. As robots become networked and their sensor streams feed cloud-based analytics, proprioceptive data becomes a cybersecurity surface. Manipulated sensor streams could be used to cause robots to deviate from safe operation, a challenge the industry is only beginning to address systematically.

- Talent scarcity. The intersection of mechatronics, real-time systems, control theory, and applied ML required to work at the proprioceptive sensing frontier is extremely specialized. Supply is far below demand, particularly in Europe and Southeast Asia, where robotics adoption is accelerating fastest.

Closing Thoughts

Proprioceptive sensing sits at an inflection point. For decades, it was a mature, well-understood domain, with encoders and IMUs doing their jobs quietly inside industrial cages. The rise of collaborative robots, humanoids, surgical systems, and AI-native control architectures has transformed it into one of the most active areas of robotics innovation.

The signals have not changed (position, velocity, force, torque), but what we do with them has changed dramatically. Machine learning is unlocking new capabilities from existing hardware. New sensor modalities are extending proprioception to soft, distributed, and neuromorphic systems. And the integration of proprioceptive tokens into large-scale robotics foundation models is beginning to blur the line between sensing and cognition.

For organizations deploying, building, or investing in robotic systems, the strategic message is clear: proprioceptive sensing is not an implementation detail. It is a core competency and a source of durable competitive advantage.

UP NEXT IN THIS SERIES

The next article will examine exteroceptive sensors: how robots perceive their external environment through cameras, LiDAR, ultrasonic, and radar systems, and how sensor fusion bridges the gap between proprioception and world modeling.